
Suppose that $f(x)$ is a differentiable function and that $a$ is some fixed number in the domain of $f$. We define the linear approximation to $f(x)$ at $x = a$ by the equation

$$L(x) = f(a) + f'(a)(x - a).$$

In this equation, the parameter $a$ is called the base point, and $x$ is the independent variable. You may recognize the equation as the equation of the tangent line at the point $(a, f(a))$. It is this line that will be used to make the linear approximation.

For example, if $f(x) = x^2$, then fT1 below is the line tangent to the parabola at the point $(1, 1)$, namely $y = 1 + 2(x - 1) = 2x - 1$:

> f:=x->x^2;

> fT1:=D(f)(1)*(x-1)+f(1);

> plot({f(x),fT1},x=-1..3);
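If you want to check this example outside Maple, here is a minimal Python sketch of the same tangent-line construction. It assumes you supply the derivative by hand, because plain Python has nothing like Maple's D. The names `linear_approximation` and `df` are illustrative only.

```python
def linear_approximation(f, df, a):
    """Return the function x -> f(a) + f'(a)(x - a), the tangent line to f at x = a."""
    fa, slope = f(a), df(a)
    return lambda x: fa + slope * (x - a)


def f(x):
    return x ** 2


def df(x):
    # derivative of x^2, entered by hand (Maple computes this with D(f))
    return 2 * x


fT1 = linear_approximation(f, df, 1)  # tangent line at the base point a = 1
print(fT1(0), fT1(1), fT1(3))  # -1 1 5
```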

At the base point $x = 1$, the function and the tangent line have two things in common: their $y$-value and their slope.

Looking at the plot, the line matches the function exactly at the base point $a$, and the approximation stays close as long as you stay close to that base point. To see how far off the approximation becomes on the plotted interval, note that the largest errors occur at the ends of the interval, i.e. at $x = -1$ and $x = 3$. Subtract the $y$-values to calculate the error.

> abs(f(-1)-subs(x=-1,fT1));

> abs(f(3)-subs(x=3,fT1));

Notice that the error grows to four at each end of the interval.
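You can also check the value 4 by hand. Write the tangent line fT1 as $1 + 2(x - 1)$. For this example the error then simplifies to a perfect square:

$$f(x) - \bigl(1 + 2(x - 1)\bigr) = x^2 - 2x + 1 = (x - 1)^2,$$

so the error is $(-1 - 1)^2 = 4$ at $x = -1$ and $(3 - 1)^2 = 4$ at $x = 3$.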

How could you find an interval on which the greatest error is a specific value? Think about this, as it will appear in the exercises.
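One way to approach this, sketched here in Python for the $x^2$ example only, is to solve $|f(x) - \text{fT1}(x)| = E$ on each side of the base point. In the exercises, Maple's fsolve does this job. Here a hand-written bisection (the hypothetical helper `bisect`) stands in for it. The sketch makes two assumptions. First, the error grows steadily as you move away from the base point, which holds here because the error is $(x - 1)^2$. Second, 10 units on either side is far enough for the error to exceed $E$.

```python
def f(x):
    return x ** 2


def tangent(x):
    # tangent line to x^2 at the base point a = 1: 1 + 2(x - 1)
    return 1 + 2 * (x - 1)


def error(x):
    return abs(f(x) - tangent(x))


def bisect(g, lo, hi, tol=1e-12):
    """Find a root of g in [lo, hi], assuming g(lo) and g(hi) have opposite signs."""
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if (g(lo) < 0) == (g(mid) < 0):
            lo = mid  # sign change is in the right half
        else:
            hi = mid  # sign change is in the left half
    return (lo + hi) / 2


E = 0.01  # largest error we are willing to accept
a = 1.0   # base point
# error(x) - E is negative at the base point and positive far enough away
left = bisect(lambda x: error(x) - E, a - 10, a)
right = bisect(lambda x: error(x) - E, a, a + 10)
print(left, right)  # approximately 0.9 and 1.1
```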

Many problems in mathematics, science, engineering, and business eventually come down to finding the roots of a nonlinear equation. It is a sad fact of life that many mathematical equations cannot be solved analytically. You already know the formula for solving quadratic polynomial equations. You might not know, however, that there are formulas for solving cubic and quartic polynomial equations. Unfortunately, these formulas are so cumbersome that they are hardly ever used. Even more unfortunately, it has been proven that no general formula can exist for the roots of polynomials of degree five or higher if it is built from the coefficients using only arithmetic operations and roots (radicals). Furthermore, if your equations involve trig functions, then it is even easier to find equations that do not have analytical solutions. For example, the following simple equation cannot be solved to give a formula for $x$:

$$\sin(x) = \frac{x}{2}$$

The need to solve nonlinear equations that cannot be solved analytically has led to the development of numerical methods. One of the most commonly used numerical methods is called Newton's method or the Newton-Raphson method. The idea of Newton's method is relatively simple. Suppose you have a nonlinear equation of the form

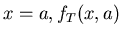

where  is a differentiable function. Then the idea of Newton's method is to start with an initial guess

is a differentiable function. Then the idea of Newton's method is to start with an initial guess

for the root and to use the tangent line to

for the root and to use the tangent line to  at

at

to approximate

to approximate  . The equation for the tangent line appears below.

. The equation for the tangent line appears below.

If the tangent line is a good approximation to  , then the

, then the  intercept of the tangent line should be a good approximation to the root. Call this value of

intercept of the tangent line should be a good approximation to the root. Call this value of  where the tangent line intersects the

where the tangent line intersects the  axis

axis

. We can solve the equation above to get the following formula.

. We can solve the equation above to get the following formula.

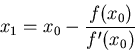

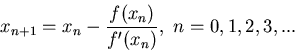
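Here is the algebra behind that formula. Set $y = 0$ at $x = x_1$ in the tangent-line equation $y = f(x_0) + f'(x_0)(x - x_0)$ and solve for $x_1$. The division step requires $f'(x_0) \neq 0$.

$$
\begin{aligned}
0 &= f(x_0) + f'(x_0)(x_1 - x_0) \\
x_1 - x_0 &= -\frac{f(x_0)}{f'(x_0)} \\
x_1 &= x_0 - \frac{f(x_0)}{f'(x_0)}
\end{aligned}
$$

If $f'(x_0) = 0$, the tangent line is horizontal. Unless $x_0$ is already a root, it never crosses the $x$-axis, so the method cannot take a step from that point.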

In practice, unless the starting point $x_0$ is very close to the root, the value $x_1$ is not close enough to the root we are seeking. Newton's method is therefore applied again, using the tangent line at $x = x_1$. This process can be repeated, leading to a sequence of values in which $x_{n+1}$ is determined from $x_n$ by the equation

$$x_{n+1} = x_n - \frac{f(x_n)}{f'(x_n)}, \qquad n = 0, 1, 2, \ldots$$

For example, consider the equation from above,

$$\sin(x) = \frac{x}{2}.$$

If you want to solve this equation using Newton's method, the first thing to do is to write it in standard form as

$$\sin(x) - \frac{x}{2} = 0.$$

Then, plot the expression to get an idea of where the roots are. You may have to adjust the plot range to locate all of the roots. The following commands show that there are exactly three roots: one at $x = 0$, and two others at about 2 and -2. There can be no roots outside the plotted window. For $|x| > 2$ we have $|x/2| > 1 \ge |\sin(x)|$, so the expression cannot vanish there.

> f:=x->sin(x)-x/2;

> plot(f(x),x=-6..6);
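The lab uses a plot to locate roots. As a rough, non-graphical stand-in, the Python sketch below scans a grid on $[-6, 6]$ and reports each subinterval where $\sin(x) - x/2$ changes sign; the helper name `sign_changes` is hypothetical. The grid is offset so that no grid point lands exactly on the root at $x = 0$. Its spacing of 0.1 is assumed fine enough that no subinterval contains two roots.

```python
import math


def f(x):
    return math.sin(x) - x / 2


def sign_changes(g, start, stop, step):
    """Return the subintervals [x, x + step] on which g changes sign."""
    brackets = []
    n = int(round((stop - start) / step))
    for k in range(n):
        x0 = start + k * step
        x1 = x0 + step
        if g(x0) * g(x1) < 0:
            brackets.append((x0, x1))
    return brackets


# grid points are -6.05, -5.95, ..., 6.05, so none of them is exactly 0
for lo, hi in sign_changes(f, -6.05, 6.05, 0.1):
    print(f"sign change in [{lo:.2f}, {hi:.2f}]")
```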

It isn't very hard to write a Maple command that will do one step of Newton's method. The examples below show a very simple way of doing so, using the function $f$ defined above and a starting value of $x_0 = 2.2$. Further iterations can be obtained by composition, as the last two commands show.

> newt:=x->x-f(x)/D(f)(x);

> newt(2.2);

> newt(newt(2.2));

> (newt@@3)(2.2);
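The same one-step map can be written in Python. This sketch assumes you supply the derivative $\cos(x) - 1/2$ by hand; Maple's D(f) computes it automatically. It also uses a hypothetical helper `iterate` in place of Maple's repeated-composition operator `@@`.

```python
import math


def f(x):
    return math.sin(x) - x / 2


def df(x):
    # derivative of sin(x) - x/2, computed by hand
    return math.cos(x) - 0.5


def newt(x):
    """One Newton step: the x-intercept of the tangent line at x."""
    return x - f(x) / df(x)


def iterate(g, n, x):
    """Apply g to x n times, like (g@@n)(x) in Maple."""
    for _ in range(n):
        x = g(x)
    return x


print(newt(2.2))              # one step
print(newt(newt(2.2)))        # two steps
print(iterate(newt, 3, 2.2))  # three steps, about 1.8955
```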

To simplify things, two commands, Newton and NewtonPlot, have been written for you. The Newton command takes three arguments: the function, the starting value, and the number of iterations. The example below performs three iterations. These commands are part of the CalcP7 package, so you must load the package first.

> with(CalcP7):

> Newton(f(x),x=2.2,3);

> NewtonPlot(f(x),x=2.2);

One of the problems with Newton's method is knowing when to stop. With a numerical method you generally cannot get a root exactly, only a numerical approximation to it. There are basically two measures of how good your approximation to the root is.

The first is the absolute value of $f(x_n)$. If this number is less than a certain tolerance, then you should have a good approximation to the root.

The second is the change in the value of $x_n$ from one iteration to the next. If $x_n$ and $x_{n+1}$ are very close, then this can also be a criterion for stopping.

Go back to the example above and experiment with changing the number of iterations.
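The two stopping tests can be combined in a single loop. The Python sketch below is an illustration, not the lab's Newton command. The function name `newton`, the shared tolerance `tol = 1e-10`, and the iteration cap `max_iter = 50` are assumptions chosen for the example.

```python
import math


def newton(f, df, x0, tol=1e-10, max_iter=50):
    """Newton's method with the two stopping tests described above.

    Stops when |f(x_{n+1})| < tol or |x_{n+1} - x_n| < tol and returns
    (approximation, number of steps). Raises RuntimeError if neither test
    passes within max_iter steps.
    """
    x = x0
    for n in range(1, max_iter + 1):
        x_new = x - f(x) / df(x)
        if abs(f(x_new)) < tol or abs(x_new - x) < tol:
            return x_new, n
        x = x_new
    raise RuntimeError(f"no convergence after {max_iter} iterations")


root, steps = newton(lambda x: math.sin(x) - x / 2,
                     lambda x: math.cos(x) - 0.5,
                     2.2)
print(root, steps)
```

Using one tolerance for both tests keeps the sketch short. In practice you might choose them separately. When $f'$ is small near the root, a small $|f(x_n)|$ does not guarantee that $x_n$ is close to the root.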

The worst thing about Newton's method is that it may fail to converge. The key to getting Newton's method to converge is to select a good starting value. The best way to do this is to plot the function and determine approximately where the roots are. Then, use these values to start Newton's method.
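Consider why the starting value matters for $\sin(x) - x/2$. The derivative $f'(x) = \cos(x) - 1/2$ is zero at $x = \pi/3 \approx 1.047$. Near that point the tangent line is nearly horizontal, so its $x$-intercept lies far from every root. The Python sketch below is an illustration, not part of the lab. It takes one Newton step from a few starting values and prints where each lands.

```python
import math


def f(x):
    return math.sin(x) - x / 2


def df(x):
    return math.cos(x) - 0.5


def newton_step(x):
    return x - f(x) / df(x)


# 1.0 is close to pi/3, where the tangent line is almost flat
for x0 in (0.5, 1.0, 2.2):
    print(f"x0 = {x0}: first step lands at {newton_step(x0):.4f}")
```

The starts at 0.5 and 2.2 move toward the roots at 0 and about 1.90. The start at 1.0 jumps to about -7.47, far from all three roots.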

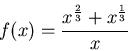

- In your scientific studies you find that your results follow the function

- You want to evaluate data around the base point

but you don't want to work with such a messy function. What is the linear approximation to this function at this base point? Plot the function and its linear approximation over the interval


.

- You want to make sure that the error in your linear approximation is never greater than 0.01. What interval of x data points can you work with? (Use the fsolve command to find each interval endpoint.) Plot the function and the linear approximation on the interval that you found. Then plot the error bounds and the error function on the same graph over that interval.

- Consider the equation

- Plot both functions on the same graph to see how many times they intersect.

- Approximate each solution by using the Newton command from the background with various starting values until you are able to find all solutions. For each solution you find, state how many iterations were needed to approximate the solution with error no greater than

.



Dina Solitro

2006-02-08
